Speech Recognition (In-car voice recognition system)

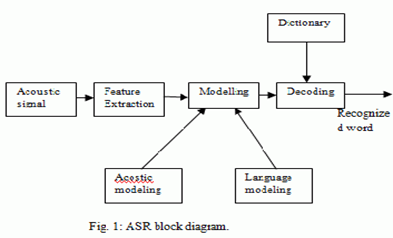

What is Speech Recognition?

- Speech recognition is a process of converting spoken words into text.

- It is also known as Automatic Speech Recognition (ASR).

- The technology took shape and gained acceptance in the early 1970s, thanks to research funded by the Advanced Research Projects Agency (ARPA) of the U.S. Department of Defense.

- It has been in wide use since the 1970s in domains such as the automotive industry, health care, the military, IT support centers, telephone directory assistance, embedded applications, and automatic voice translation into foreign languages.

A speech recognition system consists of:


- A microphone, for the person to speak into.

- Speech recognition software.

- A computer to capture and interpret the speech.

- A good-quality sound card for input and/or output.

At the heart of the software we have the translation part:

- Breaks down the spoken words into phonemes

- The phonemes are analyzed to see which units fit best; the candidate units are drawn from the system's dictionary.

- The system has to be trained to recognize characteristics of the user's voice, e.g. speed and pitch.
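As a rough illustration of matching phonemes against a dictionary, the sketch below splits a phoneme sequence into words using a tiny, hypothetical pronunciation lexicon (`LEXICON`, with simplified ARPAbet-style symbols). It assumes the phonemes have already been recognized perfectly and uses exact matching with dynamic programming; real recognizers instead score competing hypotheses probabilistically.

```python
# Hypothetical toy lexicon: word -> phoneme sequence (simplified ARPAbet-style symbols)
LEXICON = {
    "dial": ("D", "AY", "L"),
    "office": ("AO", "F", "AH", "S"),
    "turn": ("T", "ER", "N"),
    "on": ("AA", "N"),
    "radio": ("R", "EY", "D", "IY", "OW"),
}

def segment(phonemes):
    """Split a phoneme sequence into lexicon words; return None if no split exists."""
    n = len(phonemes)
    # covered[i] is a word list whose pronunciations spell out phonemes[:i], or None
    covered = [None] * (n + 1)
    covered[0] = []
    for i in range(n):
        if covered[i] is None:
            continue
        for word, pron in LEXICON.items():
            j = i + len(pron)
            # Exact match only; keep the first split found for each position
            if j <= n and tuple(phonemes[i:j]) == pron and covered[j] is None:
                covered[j] = covered[i] + [word]
    return covered[n]

print(segment(["D", "AY", "L", "AO", "F", "AH", "S"]))  # ['dial', 'office']
print(segment(["T", "ER", "N", "AA", "N"]))             # ['turn', 'on']
```

A phoneme sequence that no combination of lexicon entries can cover returns `None`, which is where a real system would fall back to its best partial hypothesis.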

Classification of Speech Recognition Systems

- Types of Speech Utterance
  - Isolated Words
  - Connected Words
  - Continuous Speech
  - Spontaneous Speech

- Types of Speaker Model
  - Speaker-dependent models
  - Speaker-independent models

- Types of Vocabulary
  - Small vocabulary: tens of words
  - Medium vocabulary: hundreds of words
  - Large vocabulary: thousands of words
  - Very large vocabulary: tens of thousands of words
  - Out-of-vocabulary (OOV): mapping a word that is not in the vocabulary to an "unknown word"
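A common way to handle out-of-vocabulary words, assumed here for illustration, is to map every word outside the vocabulary to a single unknown-word token such as `<unk>`. The sketch below does that and also reports the OOV rate (the fraction of tokens not covered by the vocabulary). `VOCABULARY` and `UNK` are hypothetical names holding a toy word list.

```python
# Hypothetical toy vocabulary for an in-car command recognizer
VOCABULARY = {"turn", "on", "the", "radio", "dial", "office"}
UNK = "<unk>"  # placeholder token for out-of-vocabulary words

def map_oov(words, vocabulary=VOCABULARY):
    """Lowercase each word and replace any word not in the vocabulary with UNK."""
    return [w if w in vocabulary else UNK for w in (word.lower() for word in words)]

def oov_rate(words, vocabulary=VOCABULARY):
    """Fraction of tokens that fall outside the vocabulary."""
    return sum(1 for w in words if w.lower() not in vocabulary) / len(words)

print(map_oov(["Turn", "on", "the", "podcast"]))   # ['turn', 'on', 'the', '<unk>']
print(oov_rate(["Turn", "on", "the", "podcast"]))  # 0.25
```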

Factors affecting accuracy of speech recognition systems

- Vocabulary size

- Speaker dependence vs. independence

- Isolated, discontinuous, or continuous speech

- Language constraints, e.g. “Red is apple the.”

- Read vs. spontaneous speech

- Adverse conditions
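Accuracy is commonly reported as the word error rate (WER): the minimum number of word substitutions, deletions, and insertions needed to turn the recognized text into the reference transcript, divided by the number of words in the reference. A minimal sketch using the standard edit-distance dynamic program (the function name is illustrative):

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # d[i][j] = edit distance between the first i reference and first j hypothesis words
    d = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        d[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        d[0][j] = j  # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            d[i][j] = min(
                d[i - 1][j] + 1,         # deletion
                d[i][j - 1] + 1,         # insertion
                d[i - 1][j - 1] + cost,  # substitution (or match when cost is 0)
            )
    return d[len(ref)][len(hyp)] / len(ref)

print(word_error_rate("turn on the radio", "turn on radio"))         # 0.25
print(word_error_rate("recognize speech", "wreck a nice beach"))     # 2.0
```

The second call shows that WER is not capped at 1.0: a hypothesis with more words than the reference can accumulate more errors than there are reference words.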

Acoustic Modeling

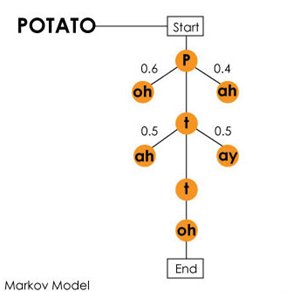

- Establishing statistical representations for the feature vector sequences computed from the speech waveform.

- Pronunciation modeling: how fundamental speech units represent larger units such as words.

- Feedback information: reshapes the speech vectors to provide noise robustness.

- Taking audio recordings of speech and their transcriptions and compiling them into statistical representations of the sounds that make up words.

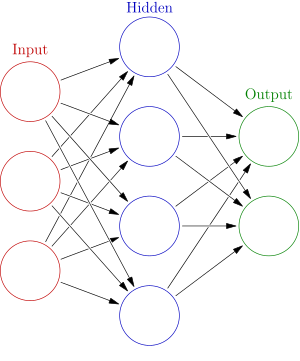

- Examples: hidden Markov models (HMMs) and neural networks.
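To make the HMM idea concrete, here is a toy Viterbi decoder that finds the most likely hidden state sequence for a sequence of observations. The two states (`S`, `IY`), the quantized observation labels (`hiss`, `voiced`), and all probabilities are invented for illustration; a real acoustic model would use several states per phoneme and continuous feature vectors rather than discrete labels. Log probabilities are used to avoid numerical underflow on long inputs.

```python
import math

def viterbi(observations, states, start_p, trans_p, emit_p):
    """Return the most likely hidden-state path and its log probability."""
    # best[t][s]: highest log probability of any path that ends in state s at time t
    best = [{s: math.log(start_p[s]) + math.log(emit_p[s][observations[0]]) for s in states}]
    backpointer = [{}]
    for t in range(1, len(observations)):
        best.append({})
        backpointer.append({})
        for s in states:
            # Pick the predecessor state that maximizes the path score into s
            prev = max(states, key=lambda p: best[t - 1][p] + math.log(trans_p[p][s]))
            best[t][s] = (best[t - 1][prev] + math.log(trans_p[prev][s])
                          + math.log(emit_p[s][observations[t]]))
            backpointer[t][s] = prev
    # Trace back from the best final state
    last = max(states, key=lambda s: best[-1][s])
    path = [last]
    for t in range(len(observations) - 1, 0, -1):
        path.insert(0, backpointer[t][path[0]])
    return path, best[-1][last]

# Toy model (all values invented for illustration)
states = ("S", "IY")
start_p = {"S": 0.8, "IY": 0.2}
trans_p = {"S": {"S": 0.6, "IY": 0.4}, "IY": {"S": 0.1, "IY": 0.9}}
emit_p = {"S": {"hiss": 0.9, "voiced": 0.1}, "IY": {"hiss": 0.2, "voiced": 0.8}}

path, log_p = viterbi(["hiss", "hiss", "voiced", "voiced"], states, start_p, trans_p, emit_p)
print(path, round(math.exp(log_p), 4))  # ['S', 'S', 'IY', 'IY'] 0.0896
```

The decoder assigns the two hiss-like frames to `S` and the two voiced frames to `IY`, which is the kind of frame-to-unit alignment an acoustic model provides.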

Language Modeling

- Assigns probabilities to sequences of words.

- Provides context to distinguish between words and phrases that sound similar, e.g. “recognize speech” and “wreck a nice beach”.

- Offers grammatical support for the processed words, e.g. “The milk is the cat”.
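The word-order point can be illustrated with a bigram language model. The sketch below trains on a hypothetical three-sentence toy corpus and uses add-one (Laplace) smoothing so that unseen word pairs still get a small nonzero probability; it then scores a well-ordered sentence against a scrambled one. Production systems train on far larger corpora and use more refined smoothing.

```python
from collections import Counter

# Hypothetical toy training corpus
CORPUS = [
    "the apple is red",
    "the car is red",
    "the apple is sweet",
]

def train_bigram(sentences):
    """Count bigrams and their left contexts, padding each sentence with <s> and </s>."""
    contexts, bigrams = Counter(), Counter()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        contexts.update(tokens[:-1])  # every token that is followed by another token
        bigrams.update(zip(tokens, tokens[1:]))
    vocab = {w for s in sentences for w in s.split()} | {"</s>"}
    return contexts, bigrams, vocab

def sentence_prob(sentence, contexts, bigrams, vocab):
    """P(sentence) as a product of add-one smoothed bigram probabilities."""
    tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
    prob = 1.0
    for prev, word in zip(tokens, tokens[1:]):
        # Add-one smoothing keeps unseen bigrams above zero
        prob *= (bigrams[(prev, word)] + 1) / (contexts[prev] + len(vocab))
    return prob

model = train_bigram(CORPUS)
good = sentence_prob("The apple is red", *model)   # about 0.004
bad = sentence_prob("Red is apple the", *model)    # about 0.0000123
print(good > bad)  # True
```

With this corpus the natural word order scores roughly 300 times higher than the scrambled one, which is how a recognizer can prefer one acoustically similar transcription over another.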

The Boom and the Technology

- Hidden Markov Models, n-gram models and neural networks are widely used in speech recognition systems.

- Since 2010, deep neural networks have been used as the learning algorithms that train these systems.

- A Statista survey predicted that the share of new cars equipped with a voice recognition system would rise from 37 percent in 2012 to 55 percent in 2019. Read more on http://www.statista.com/statistics/261685/voice-recognition-in-new-cars/
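The Statista figures are shares of new cars, so the change between 2012 and 2019 can be stated either in percentage points or as relative growth; the arithmetic for both:

$$
\Delta = 55\% - 37\% = 18 \text{ percentage points}, \qquad
\text{relative growth} = \frac{55 - 37}{37} \approx 48.6\%
$$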

A deeper dive into the technology

Language Models

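- N-gram: a contiguous sequence of n items from a given sequence of text or speech. An n-gram model estimates the probability of each item from the n-1 items before it.

- Unigram: an n-gram of size 1. A unigram model treats each word independently of its context.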
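A short sketch of both definitions: `ngrams` lists the contiguous n-grams of a token sequence, and `unigram_model` estimates each word's probability as its relative frequency (the maximum-likelihood estimate). The example sentence is made up.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return every contiguous n-gram of the token list as a tuple."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def unigram_model(tokens):
    """Maximum-likelihood unigram model: P(w) = count(w) / total tokens."""
    counts = Counter(tokens)
    total = len(tokens)
    return {word: count / total for word, count in counts.items()}

tokens = "turn on the radio and turn up the volume".split()
print(ngrams(tokens, 2)[:3])  # [('turn', 'on'), ('on', 'the'), ('the', 'radio')]
model = unigram_model(tokens)
print(model["the"] == 2 / 9)  # True: "the" occurs twice among nine tokens
```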

In-car speech recognition


- “Speech interactive mode” means interacting with the car by voice.

- The automotive industry is moving quickly to build efficient systems that provide the following in all upcoming cars:
  - Hands-free use of mobile phone handsets in the car, e.g. “Dial office”
  - Speech instructions to navigation systems, e.g. GPS-connected digital maps: “How far is it from the highway?”
  - In-car system interaction, e.g. “Turn on the radio to the travel reports channel.”
  - In-car steering systems, leading to the concept of “autonomous” cars.
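Once speech has been transcribed, a command system still has to map the text to an action. The sketch below is a minimal rule-based version, assuming a small hypothetical grammar of regular expressions; the intent names `dial_contact`, `navigation_query`, and `radio_on` are invented here and do not come from any vendor's system. Commercial systems typically rely on statistical language understanding rather than fixed patterns.

```python
import re

# Hypothetical command grammar: one regular expression per intent, applied to the transcript
INTENTS = {
    "dial_contact": re.compile(r"(?:dial|call) (?P<contact>.+)"),
    "navigation_query": re.compile(r"how far is it (?:to|from) (?:the )?(?P<place>.+?)\??"),
    "radio_on": re.compile(r"turn on the radio(?: to (?:the )?(?P<channel>.+?))?\.?"),
}

def parse_command(transcript):
    """Return (intent, slots) for the first rule matching the whole transcript, or (None, {})."""
    text = transcript.strip().lower()
    for intent, pattern in INTENTS.items():
        match = pattern.fullmatch(text)
        if match:
            # Keep only the slots that were actually filled
            slots = {name: value for name, value in match.groupdict().items() if value is not None}
            return intent, slots
    return None, {}

print(parse_command("Dial office"))
print(parse_command("How far is it from the highway?"))
print(parse_command("Turn on the radio to the travel reports channel."))
```

A fixed grammar like this is easy to audit but brittle: any phrasing it does not anticipate (for example “Open the sunroof”) falls through to `(None, {})`.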

Contemporary Speech recognition systems (in car)

- Dragon NaturallySpeaking

- Android SDK

- Apple CarPlay

- Google APIs

- CMU Sphinx

- Toyota’s Entune system

- Chevrolet’s MyLink system

- Chrysler’s Uconnect system

- Hyundai’s Blue Link system

- Mercedes-Benz’s COMAND system

Speech recognition (a solution and a cause of Driver Distraction)

- Most people believe that speech recognition technology is safer because the driver does not need to take his or her eyes off the road or hands off the steering wheel.

- Speech recognition is assumed to be a panacea for driver distraction, offering an alternative to the visual/manual demands of the system. As a result:
  - Use of handheld phones while driving is illegal in 14 states
  - Use of “hands-free” voice controls is being encouraged

In contrast

- Speech-based systems can demand attention, increasing cognitive load and distracting the driver just as visual displays and manual controls do (A Comparison of Ten 2015 In-Vehicle Information Systems). Read more on https://www.aaafoundation.org/sites/default/files/strayerIII_FINALREPORT.pdf

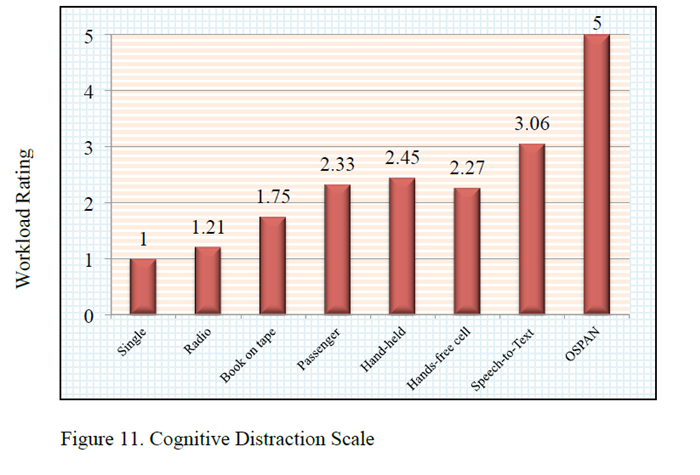

- A June 2013 study by the AAA Foundation showed that the mental workload of performing complicated tasks slows reaction times, no matter what the driver is doing with his or her hands. Read more on https://www.aaafoundation.org/sites/default/files/MeasuringCognitiveDistractions.pdf

Research by AAA

Speech-to-text: the most cognitively distracting task (AAA Foundation for Traffic Safety, 2011)

More research and findings

- Speech interfaces impose cognitive demand, which can interfere with the primary driving task. Read more on http://umich.edu/~driving/publications/LoIJVT2013.pdf

- One study compared drivers' reaction times with no in-vehicle email system against their reaction times while using a speech-based in-vehicle email system. Reaction times increased by 30% with the speech-based system. Read more on http://journalistsresource.org/studies/environment/transportation/distracted-driving-voice-activated-systems-reaction-times and http://user.engineering.uiowa.edu/~csl/publications/pdf/leecavenhaakebrown00.pdf

- The in-car environment has a lot of disturbances, which make speech recognition systems less reliable. Read more on http://www.bcs.org/upload/pdf/ewic_hci09_paper59.pdf

Latest research says

- In late 2014, a J.D. Power and Associates executive said that automotive voice-recognition technology deserved a “failing grade”. Read more on http://www.pcmag.com/article2/0,2817,2462627,00.asp

- Unlike voice recognition on portable devices, in-car systems have to contend with a lot of road and engine noise inside a moving car, so they are not yet up to the mark. Read more on http://erskine-mcmahon.com/files/cell.phone.driving.distraction.article.10.pdf

- Increased cognitive workload leads to a distracted brain. Read more on https://www.aaafoundation.org/sites/default/files/strayerIII_FACTSHEET.pdf
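Engine and road noise lower the signal-to-noise ratio (SNR), defined in decibels as 10 · log10(P_signal / P_noise), where P is mean power. The sketch below computes SNR for a synthetic 200 Hz tone standing in for speech, mixed with Gaussian noise at two arbitrary levels. The tone, the 16 kHz sampling rate, and both noise levels are assumptions for illustration, not measurements from a car.

```python
import math
import random

def snr_db(signal, noise):
    """SNR in decibels: 10 * log10(mean signal power / mean noise power)."""
    p_signal = sum(x * x for x in signal) / len(signal)
    p_noise = sum(x * x for x in noise) / len(noise)
    return 10 * math.log10(p_signal / p_noise)

SAMPLE_RATE = 16_000  # samples per second (assumed)
# One second of a unit-amplitude 200 Hz tone as a stand-in for speech (mean power 0.5)
tone = [math.sin(2 * math.pi * 200 * n / SAMPLE_RATE) for n in range(SAMPLE_RATE)]

rng = random.Random(0)  # fixed seed so the example is repeatable
quiet_cabin = [rng.gauss(0.0, 0.05) for _ in tone]  # hypothetical low noise level
highway = [rng.gauss(0.0, 0.5) for _ in tone]       # hypothetical loud noise level

print(f"quiet cabin: {snr_db(tone, quiet_cabin):.1f} dB")  # roughly 23 dB
print(f"highway:     {snr_db(tone, highway):.1f} dB")      # roughly 3 dB
```

A tenfold increase in noise amplitude costs about 20 dB of SNR, which is one reason a recognizer that works well in a quiet room can degrade in a moving car.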


Future analysis and take on Speech recognition in cars

- Using speech recognition and advanced driver-assistance systems (ADAS) together. Read more on:

Copyright © 2015. Arizona State University